Leadership Bytes for Coders

Goodbye bug squashing, hello people problems! Your guide to navigating the tech-to-leadership transition.

Welcome to AI 2.0

Created on 2025-10-24 13:55

Published on 2025-10-24 14:03

You know what’s keeping me up at night? It’s not that AI is getting smarter. It’s that we’ve been betting on the wrong horse. LLMs have plateaued. And the solution? It’s already here… we just hate admitting it.

Everyone’s waiting for GPT-6 to blow their minds. Bigger model. More parameters. The next evolution.

But that’s not where this is going.

The future of AI isn’t about making LLMs bigger. It’s about making them specialized. And it’s already happening.

GPTs have hit a wall (a lot faster than we thought).

Not a temporary wall. A structural one.

- First, there’s the context window problem. You can only feed an LLM so much information before it starts losing the thread. Even with infinite computing power, this doesn’t go away. It’s baked into how these models work.

- Second, intelligence itself has an upper limit. We saw massive gains from GPT-2 to GPT-3. Then GPT-4 came out, reportedly trained on far more data (OpenAI never published the figures)… and the jump felt smaller than the one before it. You could train a model on every piece of information in the universe and it still wouldn’t be that much better.

So we’re stuck, right?

Not quite.

GPT-5 introduced something a lot of people hated at first. Under the hood, it started routing each request to a different underlying model (a fast one or a slower reasoning one) based on what it thought would give the best result. People complained it felt slower, clunkier.

But that’s the blueprint.
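To make the routing idea concrete, here’s a toy sketch. Everything in it is made up for illustration: the model names, the keyword rules, and the `route` function are assumptions, not how GPT-5 actually decides. A real router would more likely use a learned classifier than keyword matching.

```python
from dataclasses import dataclass

# Hypothetical model names; nothing here reflects a real vendor's lineup.
ROUTES = {
    "code": "code-specialist",
    "math": "reasoning-specialist",
}
DEFAULT_MODEL = "fast-generalist"

# Toy keyword rules standing in for a learned routing classifier.
KEYWORDS = {
    "code": ("stack trace", "refactor", "function", "bug"),
    "math": ("prove", "integral", "equation"),
}


@dataclass
class RoutingDecision:
    model: str
    reason: str


def route(prompt: str) -> RoutingDecision:
    """Pick a downstream model for a prompt using simple keyword matching."""
    text = prompt.lower()
    for category, words in KEYWORDS.items():
        for word in words:
            if word in text:
                return RoutingDecision(ROUTES[category], f"matched '{word}'")
    return RoutingDecision(DEFAULT_MODEL, "no specialist matched")


if __name__ == "__main__":
    print(route("Why does this function throw a stack trace?"))
    print(route("What's a good name for my team offsite?"))
```

The point is the shape, not the rules: one cheap decision up front, then the expensive work goes to whichever model is the best fit.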

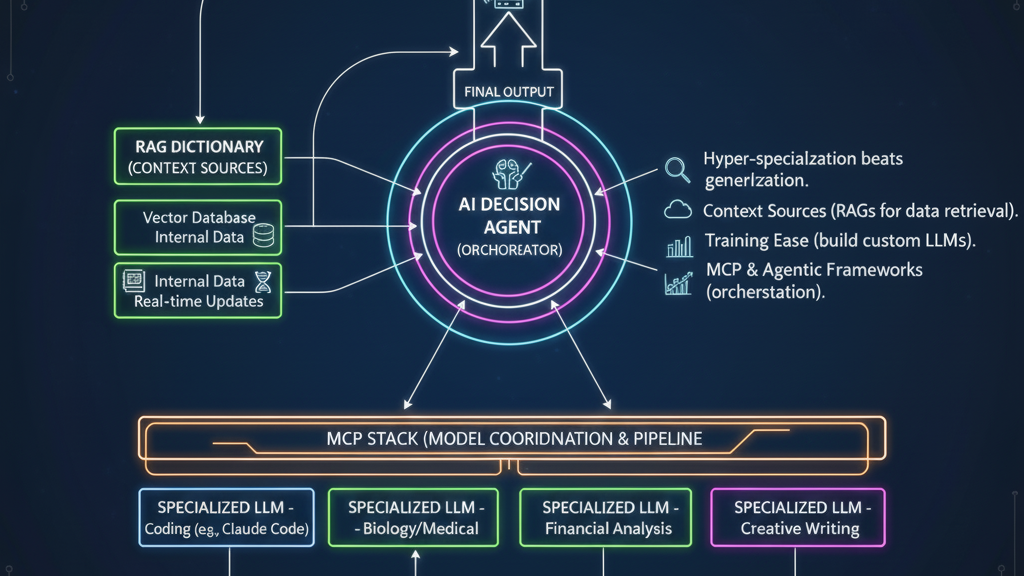

Add in agentic AI, retrieval-augmented generation (RAG), and the Model Context Protocol (MCP), wire things up so outputs from one model become inputs for another, and you’ve got something entirely new. Call it AI 2.0.

LLMs aren’t getting better. We’re just learning how to use them differently.

Here’s what that looks like:

- Hyper-specialization. Claude Code isn’t a general chatbot with a code tab bolted on. It’s Anthropic’s Claude models wrapped in tooling built specifically for working inside a codebase, and it’s a legitimately solid coding companion. Google DeepMind and Yale built a model on single-cell biology data, and it generated a new hypothesis about how to make tumors more visible to the immune system, which then held up in lab tests. Specialization beats generalization every time.

- Context sources. RAG was the first wave. Process your data into a vector database, hook it into an LLM, and suddenly you can search and synthesize information at scale. It’s not perfect, but it’s a start.

- Training ease. New tools are making it possible for organizations to build their own LLMs. Train them on all your internal data so it’s actually accessible. Combine that with RAG for real-time updates, and you’ve got a system that doesn’t need constant retraining.

- MCP and agentic frameworks. These are the scaffolding. They let you build AI decision agents that manage all the plumbing between models. Route requests, validate outputs, orchestrate workflows.
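If the RAG step in the list above feels abstract, here’s a minimal sketch of the retrieval half. It’s deliberately fake in the places that matter: word counts stand in for a real embedding model, a Python list stands in for the vector database, and the documents and function names (`embed`, `retrieve`, `build_prompt`) are all invented for this example. There’s no LLM call; the sketch stops at building the prompt you would send.

```python
import math
import re
from collections import Counter

# Stand-in corpus; in practice these would be chunks of your own documents.
DOCUMENTS = [
    "Expense reports are due on the fifth business day of each month.",
    "Production deploys are frozen during the last week of the quarter.",
    "New hires get laptop access after completing security training.",
]


def embed(text: str) -> Counter:
    """Toy 'embedding': word counts. A real system would use an embedding model."""
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[w] * b[w] for w in a if w in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


# The "vector database": each document stored next to its vector.
INDEX = [(doc, embed(doc)) for doc in DOCUMENTS]


def retrieve(question: str, k: int = 1) -> list[str]:
    """Return the k documents most similar to the question."""
    q = embed(question)
    ranked = sorted(INDEX, key=lambda item: cosine(q, item[1]), reverse=True)
    return [doc for doc, _ in ranked[:k]]


def build_prompt(question: str) -> str:
    """Put the retrieved context in front of the question for the LLM."""
    context = "\n".join(retrieve(question))
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"


if __name__ == "__main__":
    print(build_prompt("When are production deploys frozen?"))
```

Swap in a real embedding model and a real vector store and the structure stays the same: index once, retrieve per question, and let the model answer from what you retrieved instead of from what it memorized.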

So what does this actually look like in practice?

- At the top, you’ve got something like ChatGPT-6. It processes the incoming request, figures out what data sources it needs (from a centralized RAG dictionary), and decides which LLMs to use.

- Then it hands off to an AI decision agent. The agent splits the request, pulls the data, and feeds it into the MCP stack.

- From there, specialized LLMs do their thing. Results get stored in output memory.

- The decision agent picks up those results. It can summarize them, pass them to other agents for validation, or run additional processing.

- Once everything’s done, the decision agent hands the final output back to ChatGPT-6 and returns it to the user.
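The steps above can be sketched in a few dozen lines. Treat this as a napkin drawing, not an implementation: the “models” are plain functions, the RAG dictionary is a hard-coded mapping, the topic names and index names are invented, and the MCP transport between components is left out entirely.

```python
from typing import Callable


# Stub "specialized LLMs": plain functions standing in for model calls.
def legal_model(task: str, context: str) -> str:
    return f"[legal] {task} (using {context})"


def finance_model(task: str, context: str) -> str:
    return f"[finance] {task} (using {context})"


SPECIALISTS: dict[str, Callable[[str, str], str]] = {
    "legal": legal_model,
    "finance": finance_model,
}

# Stand-in for the centralized RAG dictionary: topic -> data source name.
RAG_DICTIONARY = {"legal": "contracts-index", "finance": "ledger-index"}


def plan(request: str) -> list[str]:
    """Front model: decide which specialists (and data sources) are needed."""
    text = request.lower()
    return [topic for topic in SPECIALISTS if topic in text]


def decision_agent(request: str, topics: list[str]) -> str:
    """Split the request, run each specialist, then combine the results."""
    output_memory: dict[str, str] = {}
    for topic in topics:
        context = RAG_DICTIONARY[topic]          # pull the data
        task = f"{topic} review of: {request}"   # split the request
        output_memory[topic] = SPECIALISTS[topic](task, context)
    # Summarize step: just a join here; could be another model or a validator.
    return "\n".join(output_memory[topic] for topic in topics)


def handle(request: str) -> str:
    """Top-level entry point: plan, delegate, return the final output."""
    topics = plan(request)
    if not topics:
        return "No specialist needed; answer directly."
    return decision_agent(request, topics)


if __name__ == "__main__":
    print(handle("Check the legal and finance risk of this deal"))
```

Notice how little of this is “AI.” Most of the work is plumbing: deciding who gets what, keeping track of results, and stitching them back together.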

None of this makes the context window go away. Complex systems and large data sets are still going to be an issue, because some problems can’t be easily broken down and recombined. And the output can get super wonky when the results are exceedingly large, so context compression and output chunking will be key.
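Output chunking is the easier of those two to picture. Here’s a rough sketch, assuming a character budget as a stand-in for a token budget (a real system would count tokens with the model’s tokenizer). The `chunk_text` name and the default size are made up for this example.

```python
def chunk_text(text: str, max_chars: int = 200) -> list[str]:
    """Split text into chunks of at most max_chars, breaking on whitespace.

    A single word longer than max_chars is kept whole in its own chunk.
    """
    chunks: list[str] = []
    current = ""
    for word in text.split():
        candidate = f"{current} {word}" if current else word
        if len(candidate) <= max_chars or not current:
            current = candidate
        else:
            chunks.append(current)
            current = word
    if current:
        chunks.append(current)
    return chunks


if __name__ == "__main__":
    long_output = "result " * 100
    for i, chunk in enumerate(chunk_text(long_output, max_chars=80)):
        print(i, len(chunk))
```

Each chunk can then be summarized or validated on its own before the pieces are recombined, which is exactly where the “can’t always be broken down” caveat starts to bite.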

It’s messy (and still prone to hallucinations). It’s orchestrated. And it’s the only way forward.

Because the era of “one model to rule them all” is over.

Welcome to AI 2.0.

#AI #MachineLearning #EnterpriseAI #TechLeadership #SoftwareDevelopment
